Optimizing Page Loading in the Web Browser

It is well understood that page loading speed in a web browser is limited by the available connection bandwidth. However, bandwidth is not the only limiting factor, and in many cases it is not even the most important one.

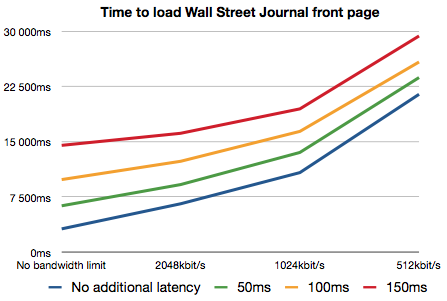

The graph above shows the time it took to fully load the Wall Street Journal front page (chosen because its complexity is representative of many modern web sites) with a recent WebKit build. Browser caches were cleared before each page load. The Mac OS X dummynet facility was used to simulate various network conditions by introducing packet latency and capping the available bandwidth. Since the testing was done against a live web site, the actual connection latency was a factor as well (ping time to wsj.com was ~75ms).

From the figure it is clear that while available bandwidth is a significant factor, so is the connection latency. Introducing just 50ms of additional latency doubled the page loading time in the high bandwidth case (from ~3200ms to ~6300ms).

Latency is a significant real-world problem. Wireless networking technologies often have inherently high latencies. Packet loss and retransmits due to interference make the situation worse. Geographical distance introduces latency: the round-trip delay between the US East and West Coasts alone is somewhere around 70ms. Loaded web servers may not respond immediately.

Why does latency have such a huge impact on page loading speed? After all, to load a page completely a web browser just needs to fetch the page source and all the associated resources. The browser makes multiple connections to servers and tries to load as many resources in parallel as possible. Why would it matter much if it takes slightly longer to start loading an individual resource? Other resources should be loading during that time and the available bandwidth should still get fully utilized.

It turns out that figuring out “all the associated resources” is the hard part of the problem. The browser does not know what resources it should load until it has completely parsed the document. When the browser first receives the HTML text of the document, it feeds it to the parser. The parser builds a DOM tree out of the document. When the parser encounters an element like `<img>` that references an external resource, the browser requests that resource from the network.

Problems start when a document contains references to external scripts. Any script can call document.write(). Parsing can’t proceed until the script has been fully loaded and executed and any document.write() output has been inserted into the document text. Since parsing is not proceeding while the script is being loaded, no further requests for other resources are made either. This quickly leads to a situation where the script is the only resource loading and connection parallelism does not get exploited at all. A series of script tags essentially loads serially, hugely amplifying the effect of latency.
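To make the amplification concrete, here is a deliberately simplified model, not a description of how WebKit measures anything. It assumes every request costs one full round trip before any data arrives, that the link is shared perfectly, and that there is no connection limit or TCP slow start. The function names, parameters and units are all made up for illustration.

```python
def serial_load_ms(sizes_kb, rtt_ms, bandwidth_kbit_s):
    """Each resource is requested only after the previous one has finished,
    as happens with a series of parser-blocking script tags."""
    total = 0.0
    for size_kb in sizes_kb:
        transfer_ms = size_kb * 8 * 1000 / bandwidth_kbit_s
        total += rtt_ms + transfer_ms  # one round trip paid per resource
    return total


def parallel_load_ms(sizes_kb, rtt_ms, bandwidth_kbit_s):
    """All resources are requested at once: the round trips overlap,
    so latency is paid once and the link stays busy afterwards."""
    transfer_ms = sum(sizes_kb) * 8 * 1000 / bandwidth_kbit_s
    return rtt_ms + transfer_ms
```

Under these assumptions, ten 20 KB scripts over an 8000 kbit/s link with a 125ms round trip (roughly the 75ms ping plus 50ms of simulated latency from the test above) take 1450ms when loaded serially but only 325ms when requested together. The bytes transferred are identical; the difference is entirely round trips.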

The situation is made worse by scripts that load additional resources. Since those resources are not known before the script is executed, it is critical to load scripts as quickly as possible. The worst case is a script that loads more scripts (by using document.write() to write `<script>` tags), a common pattern in JavaScript frameworks and ad scripts.
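One rough way to reason about such chains is to count the sequential round trips they force. The sketch below assumes a hypothetical mapping from each resource to the resources that only become known once it has been loaded and executed, and it optimistically assumes that everything discovered at the same time loads fully in parallel. The file names are invented.

```python
def round_trips(resource, discovered_by):
    """Minimum number of sequential round trips needed to load `resource`
    and everything it (transitively) reveals. Assumes no cycles."""
    children = discovered_by.get(resource, [])
    deepest_child = max(
        (round_trips(child, discovered_by) for child in children),
        default=0,
    )
    return 1 + deepest_child


# Hypothetical page: a.js writes two more script tags, one of which
# writes yet another one.
example = {
    "page.html": ["a.js"],
    "a.js": ["b.js", "ad.js"],
    "ad.js": ["tracker.js"],
}
```

For this example `round_trips("page.html", example)` is 4, so even with unlimited bandwidth the page cannot finish in fewer than four round trips. Every extra level of script-written scripts adds at least one more.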

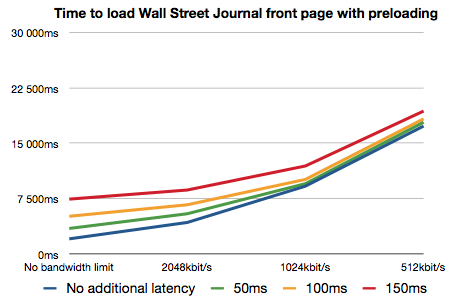

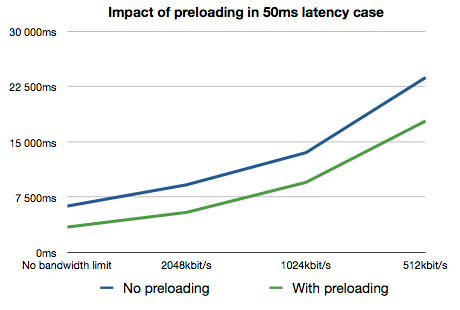

The latest WebKit nightlies contain some new optimizations to reduce the impact of network latency. When script loading halts the main parser, we start up a side parser that goes through the rest of the HTML source to find more resources to load. We also prioritize resources so that scripts and stylesheets load before images. The overall effect is that we are now able to load more resources in parallel with scripts, including other scripts.
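The idea of the side parser can be sketched in a few lines. This is an illustrative approximation only, not WebKit's implementation: it uses Python's standard `html.parser` module, looks at just three kinds of references, and the class name, function name and priority values are assumptions made for the example.

```python
from html.parser import HTMLParser

# Lower number = requested earlier. Illustrative values only.
PRIORITY = {"script": 0, "stylesheet": 0, "image": 1}


class PreloadScanner(HTMLParser):
    """Collects external resource references without building a DOM."""

    def __init__(self):
        super().__init__()
        self.found = []  # (kind, url) tuples in document order

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "script" and attributes.get("src"):
            self.found.append(("script", attributes["src"]))
        elif tag == "link" and attributes.get("href"):
            if (attributes.get("rel") or "").lower() == "stylesheet":
                self.found.append(("stylesheet", attributes["href"]))
        elif tag == "img" and attributes.get("src"):
            self.found.append(("image", attributes["src"]))


def scan_remaining(html_rest):
    """Scan the not-yet-parsed HTML and return the resources to request,
    scripts and stylesheets first, images after."""
    scanner = PreloadScanner()
    scanner.feed(html_rest)
    scanner.close()
    # sorted() is stable, so document order is kept within each priority.
    return sorted(scanner.found, key=lambda item: PRIORITY[item[0]])
```

While the main parser is blocked on a script, calling `scan_remaining()` on the rest of the source would yield a list of requests that can be issued immediately. A scanner like this is only speculative: it cannot see anything a script later adds with document.write(), so the main parser still has the final say.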

You can see from the graphs above that these optimizations significantly reduce the impact of network latency and generally improve page loading speed. For example, with 50ms of simulated latency and no bandwidth limit, the overall page loading time was 2.8s faster (6.3s to 3.5s). With bandwidth capped to 512kbit/s, the improvement was 5.9s (23.8s to 17.9s).